Comparing Agents

Run the same tests against multiple configurations and see the results side by side. Use a comparison to choose between candidate agents, or to check that a single agent is ready to ship across the scenarios and personalities you care about.

Two modes

When you start a new comparison, you pick one of two modes. The mode determines what the setup form asks for and how the results are laid out.

- Compare agents - run the same scenarios against two or more agents (or agent plus personality combinations) and see a side-by-side table with a baseline and deltas. Use this when A/B testing models or prompts.

- Readiness report - run one agent across scenarios and personalities, organized into groups, and get a pass-rate verdict per group. Use this before shipping, or as a coverage audit across different customer segments.

Compare agents

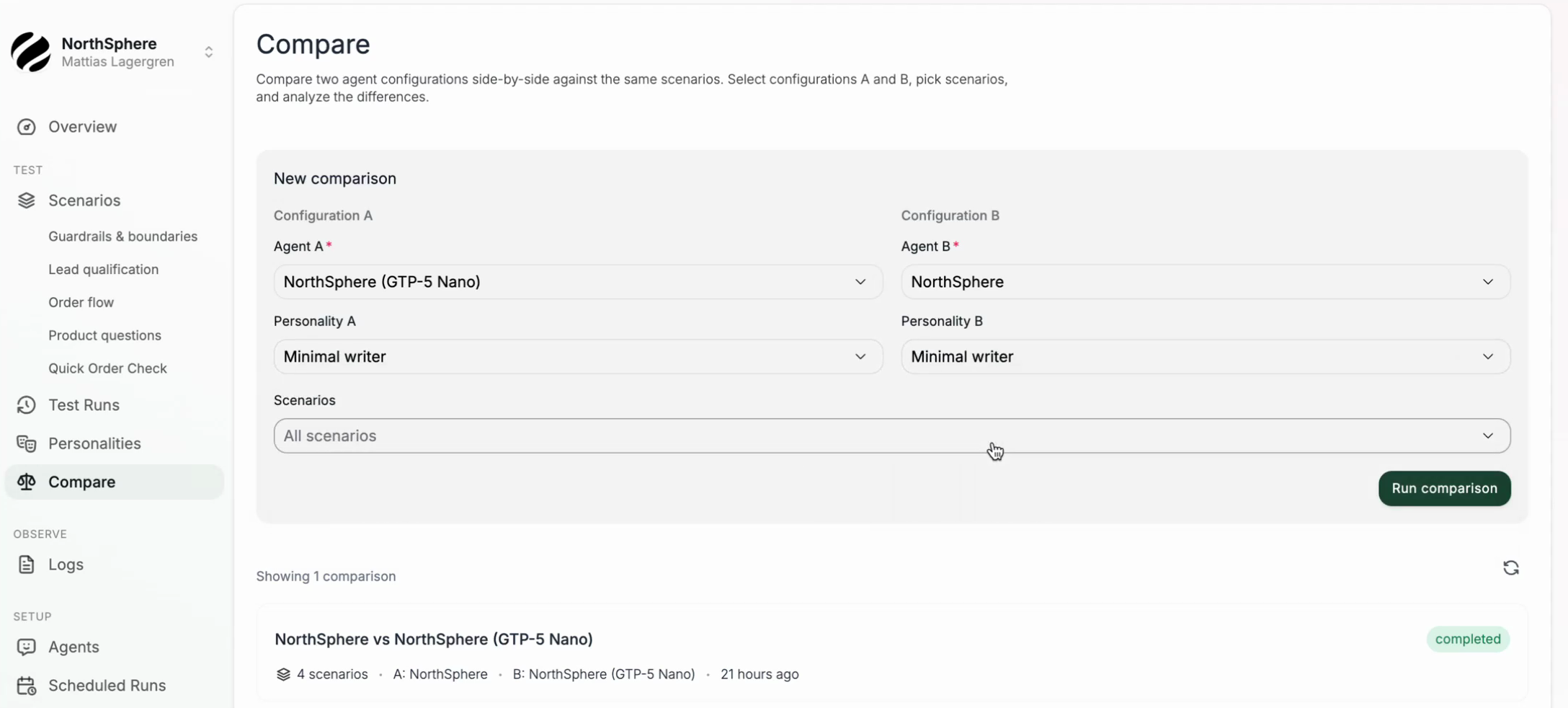

Pick two or more agent configurations. Each configuration is an agent plus an optional personality. Then pick the scenarios to run.

- Go to the Compare page in the left sidebar and click New comparison.

- Choose Compare agents.

- Add at least two configurations. Mix agents, personalities, or both - each configuration adds a column to the results table.

- Select the scenarios to include.

- Click Run comparison.

Voxli runs each selected scenario against every configuration and generates one test result per scenario-configuration pair.
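The number of test results is the number of scenarios multiplied by the number of configurations. A minimal sketch of that cross product, assuming a configuration can be modeled as an agent name plus an optional personality; `Configuration`, `plan_comparison`, and the agent, personality, and scenario names are hypothetical illustrations, not Voxli's API.

```python
from itertools import product
from typing import NamedTuple, Optional


class Configuration(NamedTuple):
    """Hypothetical model of one results column: an agent plus an optional personality."""
    agent: str
    personality: Optional[str] = None


def plan_comparison(scenarios, configurations):
    """Pair every selected scenario with every configuration (one test result per pair)."""
    if len(configurations) < 2:
        raise ValueError("Compare agents needs at least two configurations")
    return list(product(scenarios, configurations))


configs = [
    Configuration("support-bot-v1"),
    Configuration("support-bot-v2", personality="impatient"),
]
runs = plan_comparison(["refund", "cancel", "upgrade"], configs)
print(len(runs))  # 6
```

Adding a third configuration to the same three scenarios would raise the total to nine test results, which is worth keeping in mind when comparing many candidates at once.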

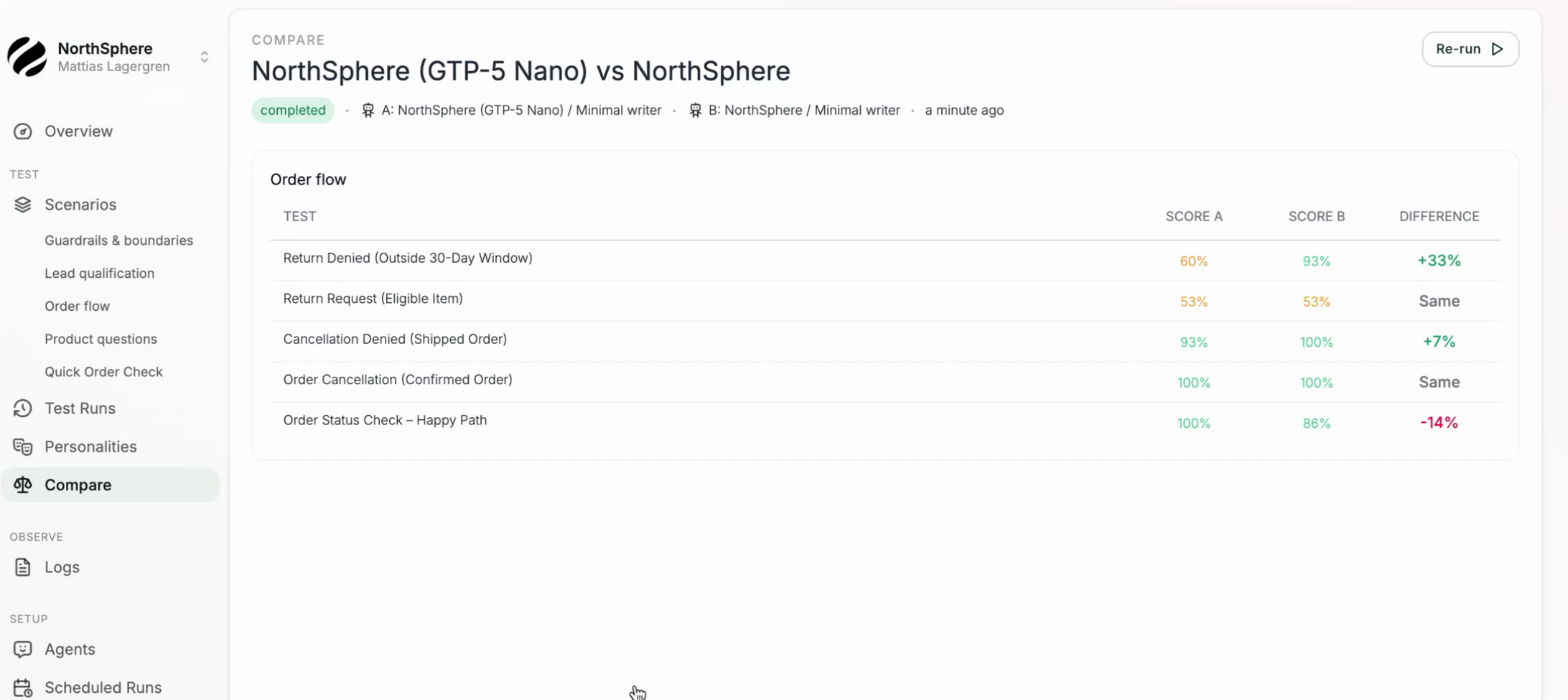

Reading the results

Results are organized per scenario. Each scenario shows a table with one column per configuration plus an average column. Use the Show tests switch to toggle the rows between scenarios (the default) and individual tests.

Click any score cell to open the full test result with the conversation transcript and assertion outcomes.

Additional metrics

Use the Metric selector above the results table to switch the cells between:

- Score - weighted pass rate per test (the default).

- Time - average agent response time per test.

- Tokens - total tokens consumed per test.

- Cost - estimated cost per test.

- Hallucinations - number of unsupported or contradicted claims extracted from the agent’s responses.

Switching the metric changes every cell at once, so you can compare the configurations along one dimension at a time.

Readiness report

A readiness report checks one agent against a structured slice of your coverage. You organize the work into groups, where each group picks a set of scenarios and one or more personalities to run them with. Every scenario in a group is run against every personality in the group. Groups let you tag different slices of the work (for example “New customers” vs “Existing customers”) and see a pass-rate verdict for each.
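Because every scenario in a group runs against every personality in that group, each group contributes scenarios × personalities runs, and the report total is the sum over groups. A minimal sketch of that expansion, assuming groups can be modeled as plain lists of scenario and personality names; `Group`, `plan_readiness`, and all names in the example are hypothetical, not Voxli's API.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Group:
    """Hypothetical model of one readiness group: scenarios crossed with personalities."""
    scenarios: List[str]
    personalities: List[str]
    name: str = ""  # group names are optional


def plan_readiness(agent, groups):
    """List every (group, agent, scenario, personality) run the report covers."""
    runs = []
    for group in groups:
        for scenario in group.scenarios:
            for personality in group.personalities:
                runs.append((group.name, agent, scenario, personality))
    return runs


groups = [
    Group(["signup", "first order"], ["curious", "terse"], name="New customers"),
    Group(["refund"], ["frustrated"], name="Existing customers"),
]
runs = plan_readiness("support-bot", groups)
print(len(runs))  # 2*2 + 1*1 = 5
```

Scenarios are not crossed with personalities from other groups, so splitting coverage into groups keeps the run count proportional to the slices you actually care about.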

- Go to the Compare page and click New comparison.

- Choose Readiness report.

- Pick the agent being evaluated.

- Add one or more groups. For each group, give it an optional name and pick the scenarios and personalities it covers.

- Click Run comparison.

Reading the results

The results page opens with an insights row at the top showing the overall pass rate, number of blocker failures, number of warnings, and how many tests have completed so far. Below that, each group is rendered as its own matrix of scenarios and personalities.

- Green pass rates (90% or above) indicate the group is ready to ship.

- Yellow pass rates (70% to 89%) indicate issues worth reviewing.

- Red (below 70%, or any blocker failure) indicates a critical problem in that group.
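The verdict rules above can be read as a small decision function. A minimal sketch, assuming the pass rate is expressed as a percentage from 0 to 100 and that any value from 70% up to (but not including) 90% counts as yellow; `group_verdict` is a hypothetical name and this is not Voxli's implementation.

```python
def group_verdict(pass_rate_pct: float, blocker_failures: int) -> str:
    """Map a group's pass rate (0-100) and blocker count to a verdict color."""
    # Any blocker failure is critical regardless of the pass rate.
    if blocker_failures > 0 or pass_rate_pct < 70:
        return "red"     # critical problem in this group
    if pass_rate_pct >= 90:
        return "green"   # ready to ship
    return "yellow"      # worth reviewing


print(group_verdict(92.5, 0))  # green
print(group_verdict(92.5, 1))  # red
print(group_verdict(80, 0))    # yellow
```

Checking blockers first reflects the rule that a single blocker failure turns a group red even when its pass rate would otherwise be green.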

Group names can be edited inline by clicking the title. Use the Show tests switch to expand each scenario row into its individual tests, or the Metric selector to swap the cells between score, time, tokens, cost, and hallucinations.

Re-running comparisons

After changing an agent (or the underlying scenarios), you can re-run an existing comparison to get fresh results. This creates a new comparison with the same configuration, so you can track progress over time.

What’s next

- Agents - learn about the different agent types and how to connect them.

- Personalities - shape how the simulated user talks across groups or configurations.