Introduction

Voxli is an automated testing platform for conversational AI agents. It simulates multi-turn conversations against your agent and scores them on correctness, tone, tool usage, and more, so you catch regressions before your users do.

What Voxli tests

You define scenarios with assertions. Voxli plays the user, talks to your agent, and evaluates each response. Out of the box you can assert on:

- Answer correctness - is the response accurate given your knowledge base or expected output?

- Tone & style - does the agent stay on-brand, polite, or within your guidelines?

- Tool calling - did the agent call the right tools, in the right order, with the right parameters?

- Conversation flow - does the agent handle multi-turn context, handoffs, and edge cases properly?
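
As a rough illustration of a scenario with assertions, the sketch below models one in plain Python. The `Scenario` and `Assertion` classes, their fields, the `kind` labels, and the tool names `lookup_order` and `issue_refund` are all hypothetical and are not Voxli's actual schema; they only show how the four assertion types above might attach to a single scenario.

```python
from dataclasses import dataclass, field


@dataclass
class Assertion:
    # Hypothetical fields, not Voxli's real schema.
    kind: str         # e.g. "correctness", "tone", "tool_call", "flow"
    expectation: str  # plain-language statement of what must hold


@dataclass
class Scenario:
    name: str
    user_goal: str    # what the simulated user is trying to achieve
    assertions: list = field(default_factory=list)


refund = Scenario(
    name="refund-request",
    user_goal="Ask for a refund on a recent order",
    assertions=[
        Assertion("correctness", "States the refund policy accurately"),
        Assertion("tone", "Stays polite throughout"),
        # Tool names here are made up for the example.
        Assertion("tool_call", "Calls lookup_order before issue_refund"),
    ],
)

# Collect the assertion kinds attached to this scenario.
kinds = [a.kind for a in refund.assertions]
```

Keeping each assertion as a small, independent record makes it easy to report which check failed on which scenario.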

Scenarios can run on demand, on a schedule, or on push via GitHub Actions.

How it works

Voxli drives a conversation loop: it sends a user message to your agent, receives the response, and evaluates the conversation against your assertions. It then continues the conversation until the scenario completes.
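
The loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Voxli's implementation or API: `run_scenario`, `scripted_user`, `echo_agent`, and `non_empty_check` are hypothetical stand-ins for the simulated user, your agent, and the assertion evaluator.

```python
def run_scenario(simulate_user, agent, evaluate, max_turns=10):
    """Drive a send / receive / evaluate loop until the scenario ends."""
    history = []   # alternating user and assistant messages
    results = []   # one evaluation result per completed turn
    for _ in range(max_turns):
        user_text = simulate_user(history)
        if user_text is None:  # simulated user has nothing left to say
            break
        history.append({"role": "user", "content": user_text})
        reply = agent(history)
        history.append({"role": "assistant", "content": reply})
        results.append(evaluate(history))
    return history, results


# Hypothetical stand-ins so the sketch runs on its own.
script = ["Hi, I need a refund.", "Order 1234."]


def scripted_user(history):
    turn = len(history) // 2  # two messages are appended per turn
    return script[turn] if turn < len(script) else None


def echo_agent(history):
    return "You said: " + history[-1]["content"]


def non_empty_check(history):
    return {"non_empty_reply": bool(history[-1]["content"])}


history, results = run_scenario(scripted_user, echo_agent, non_empty_check)
```

The `max_turns` cap is a design choice in this sketch: it guarantees the loop terminates even if the simulated user never signals completion.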

There are two ways to integrate.

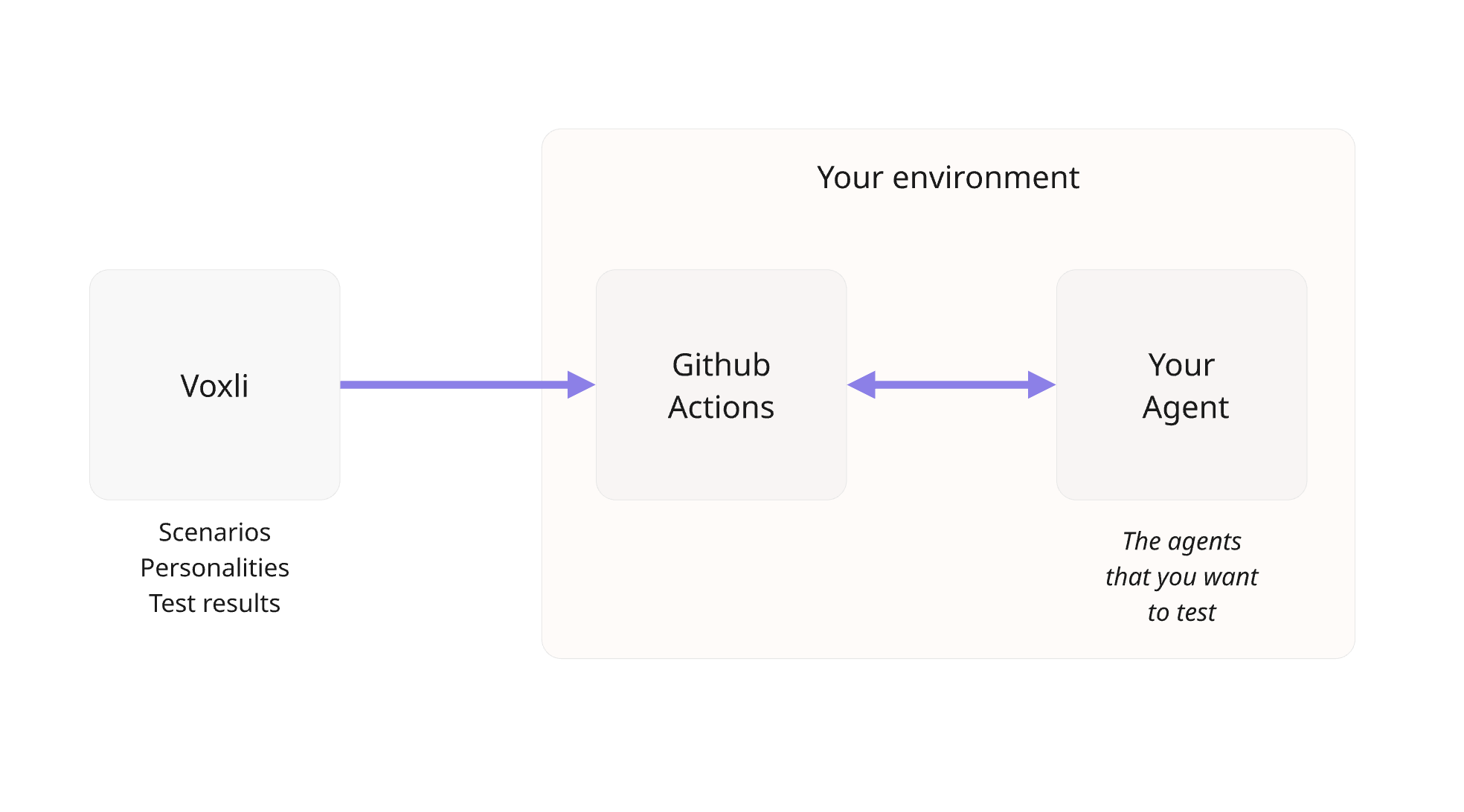

GitHub Mode

Voxli triggers a GitHub Actions workflow in your repository. The workflow runs the conversation loop between Voxli and your agent.

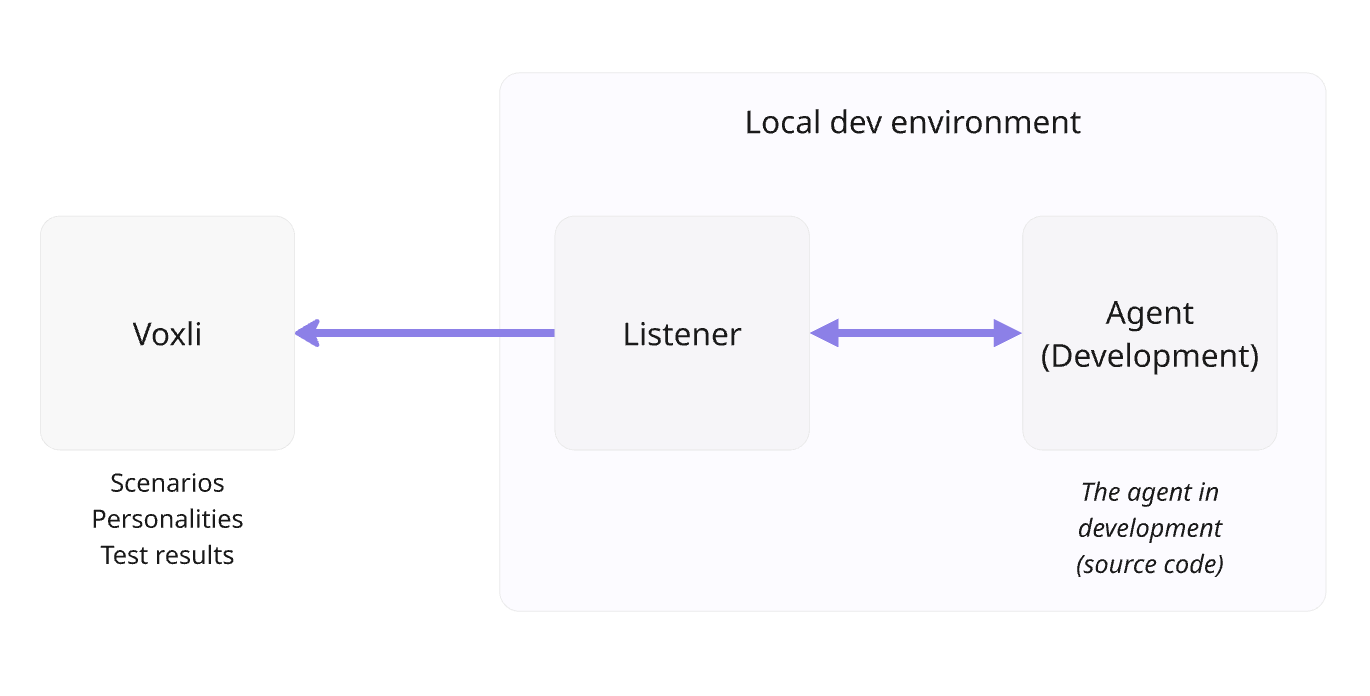

Local Mode

You run a script locally that calls the Voxli API directly to drive the conversation loop. Best for quick iteration during development.

Both modes score each conversation automatically when it ends. See API Overview for authentication and setup, or GitHub for the full GitHub workflow guide.